360 Video & WebVR | Blender/FrameVR

360 Video

360 video can be used for a variety of applications, from storytelling to simulations of real-life scenarios for emergency services training. The promise of virtual reality here is that the experience is more immersive than traditional media, but that immersion brings a unique set of challenges when developing an experience. Attention has to be paid to how much control a user has, to physiological effects such as motion sickness, and to how a narrative is conveyed in order to hold the user’s attention.

Lab Work:

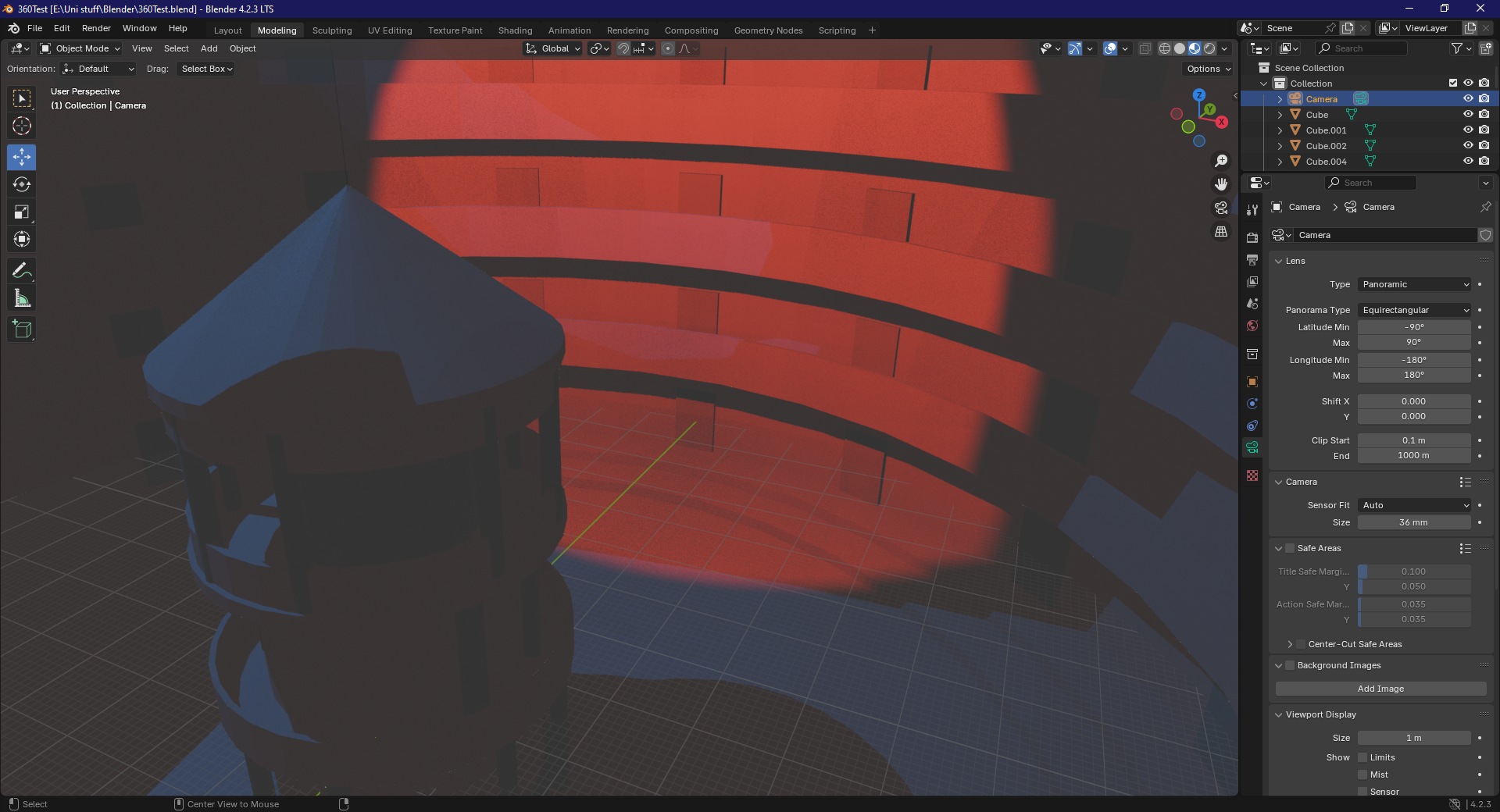

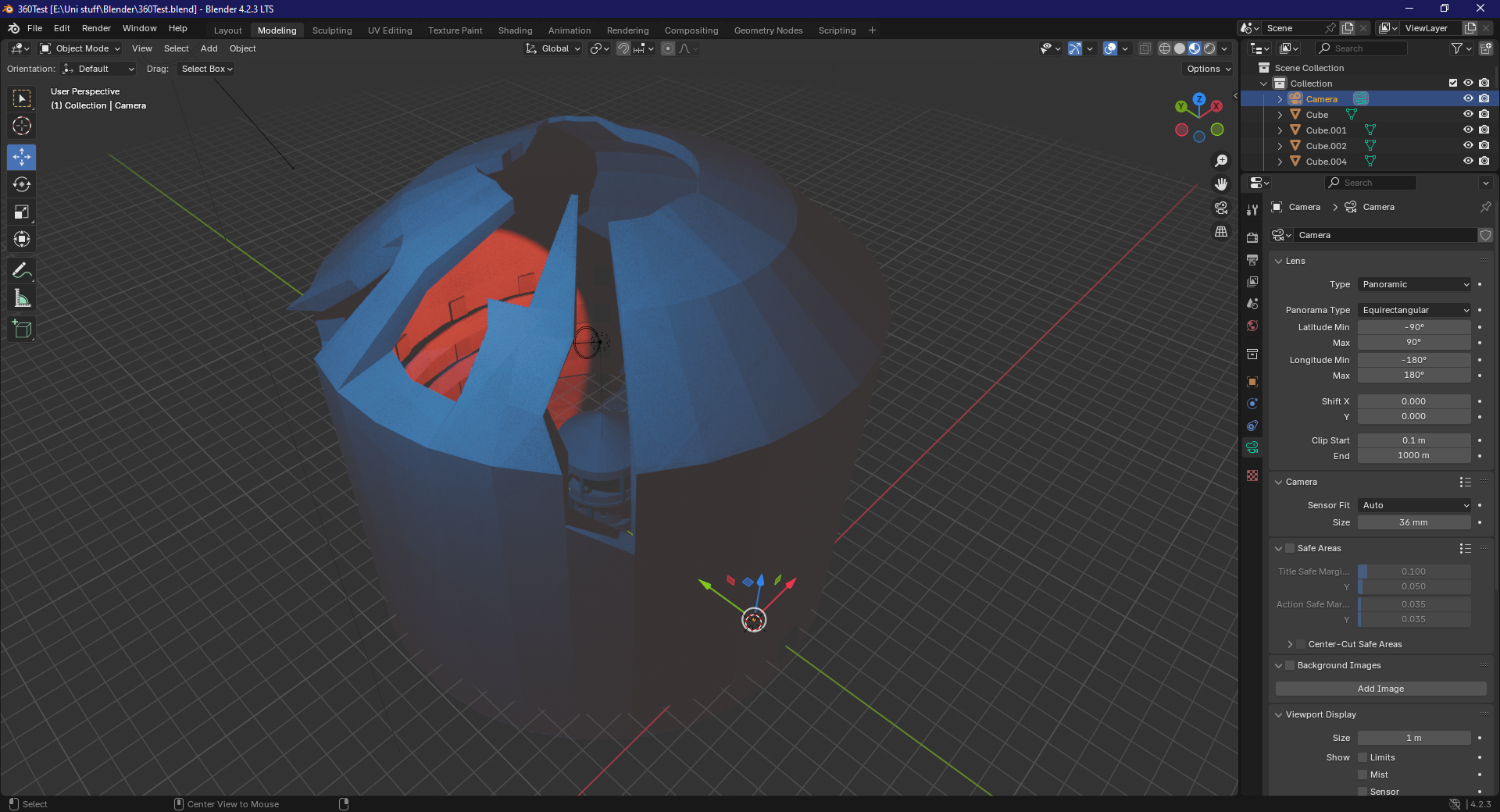

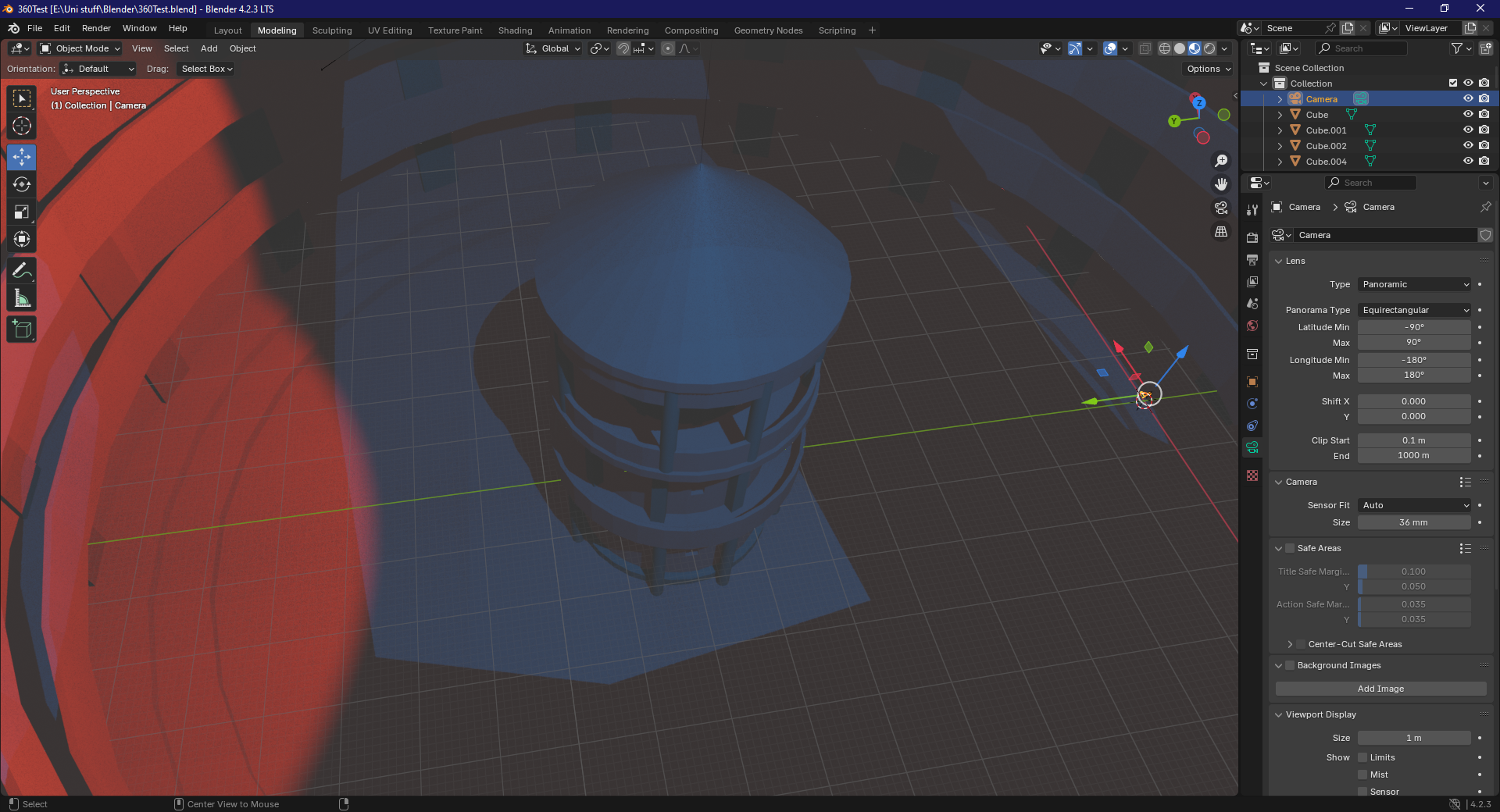

360 Videos in Blender:

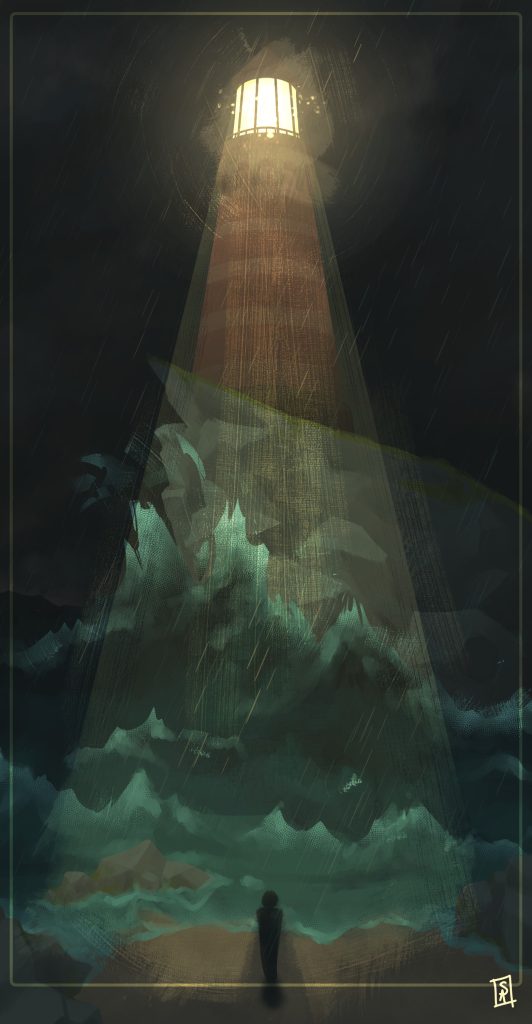

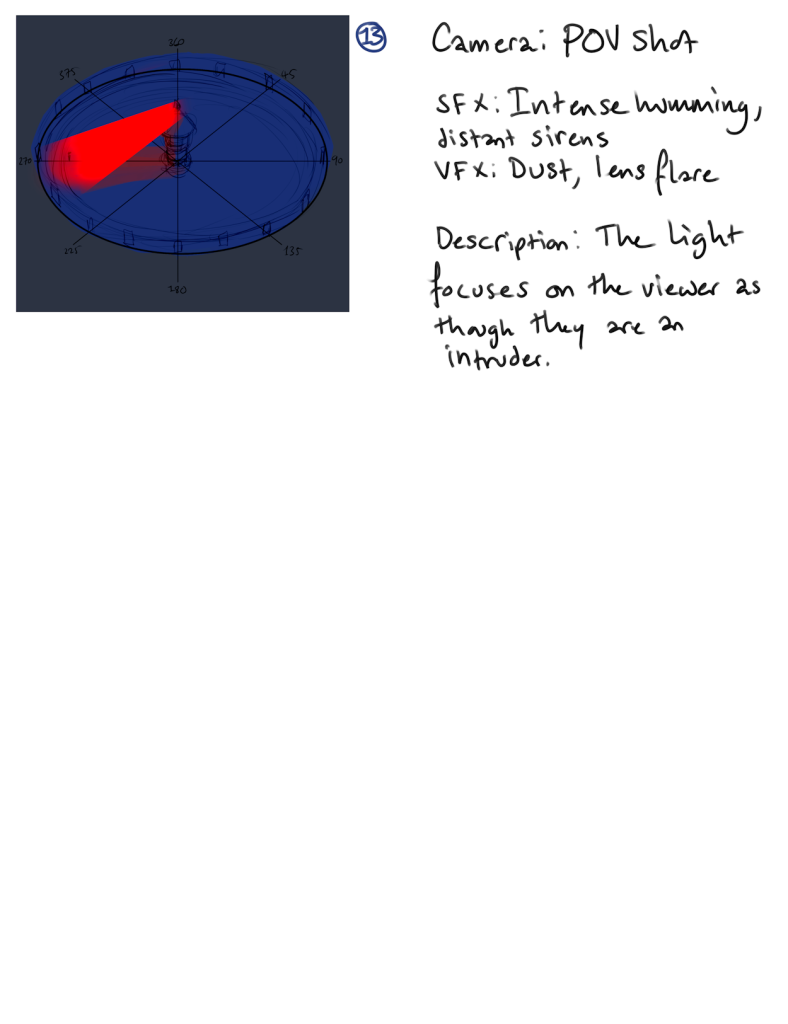

The initial idea for the prototype was to recreate a dream I had involving a lighthouse on a stormy beach, which I had already based a painting on. The main set piece would be the searchlight panning towards the viewer and focusing on them.

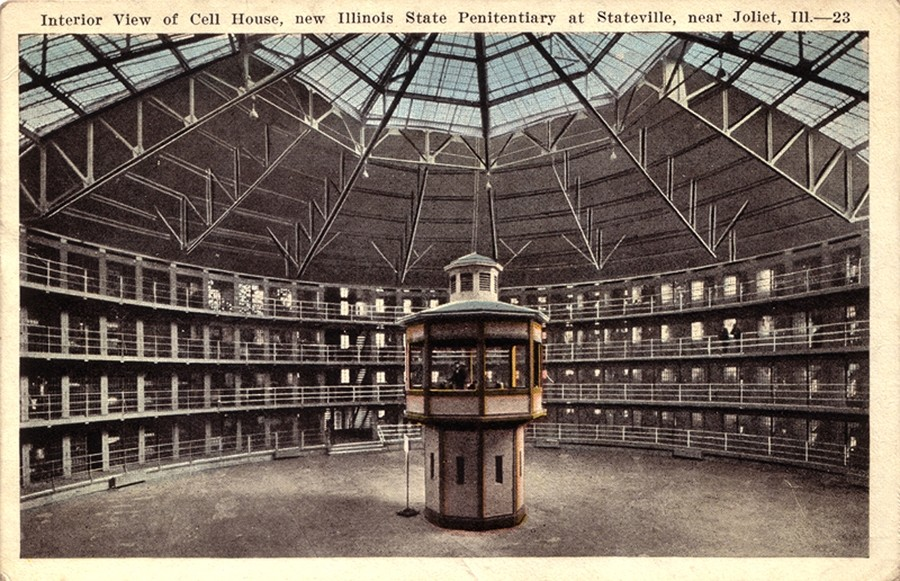

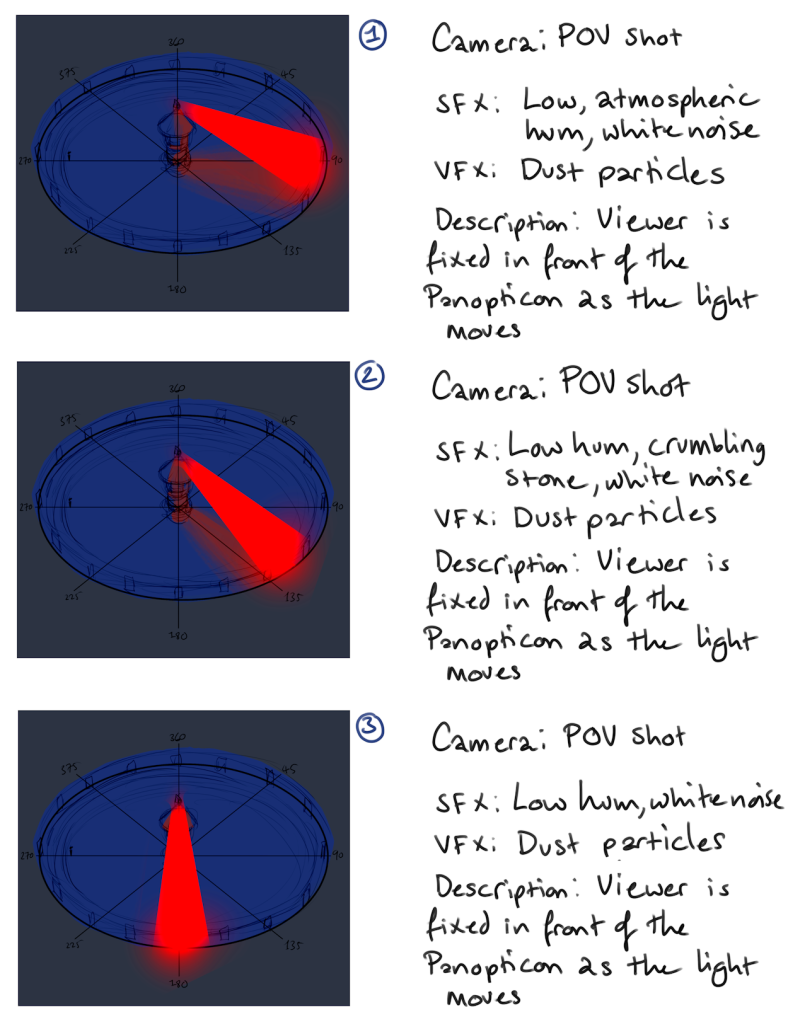

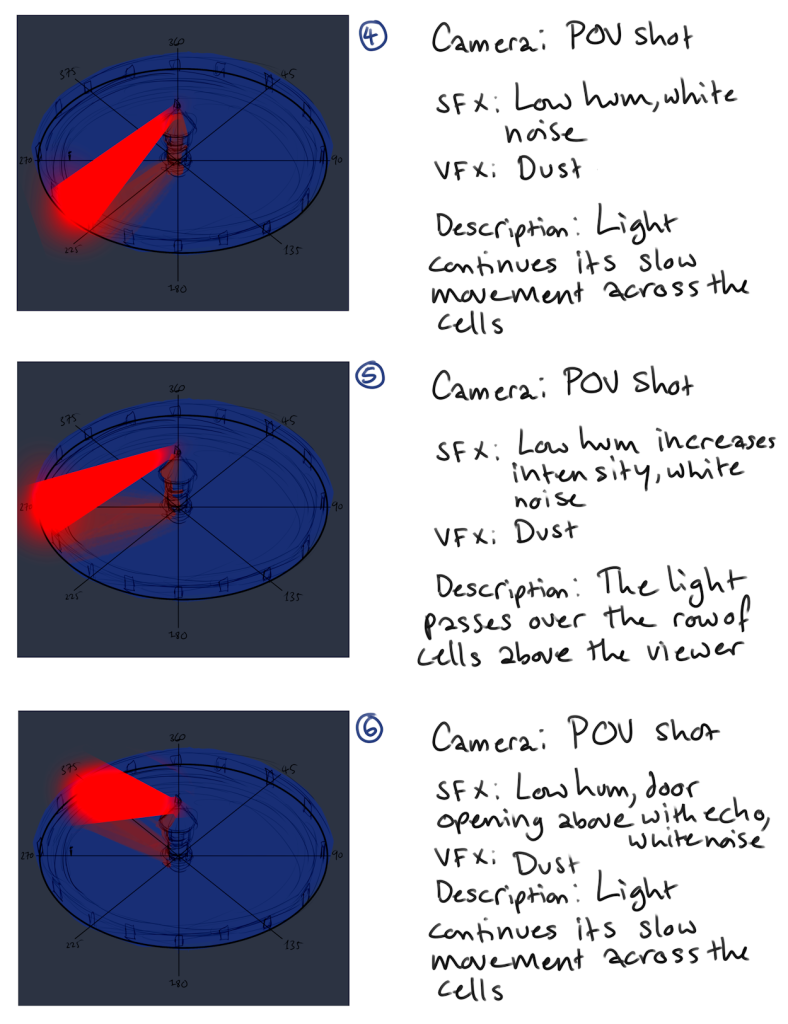

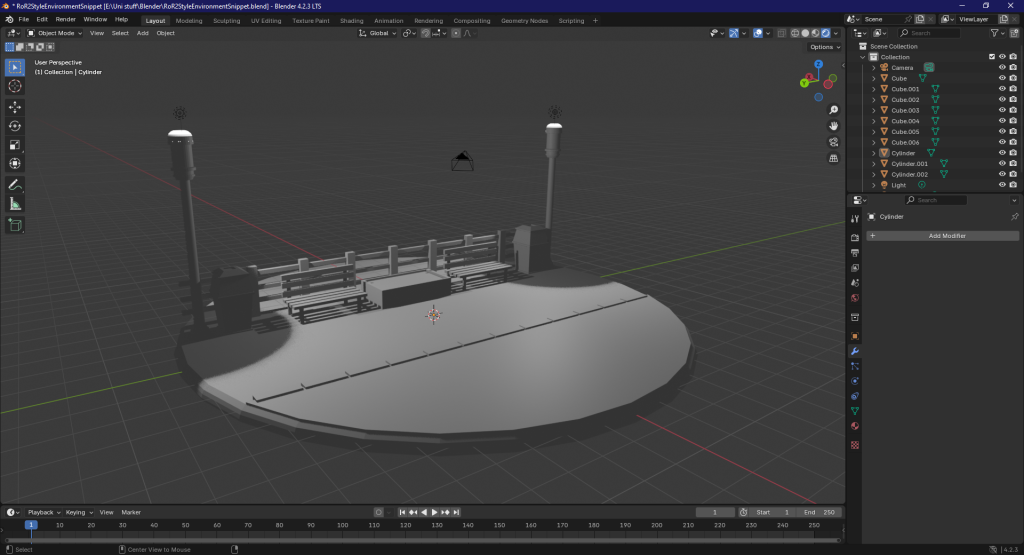

For ease of environment blockout when testing, however, I chose instead to make a panopticon: a form of prison with a guard tower in the middle of a ring of cells. That way, the searchlight still made sense.

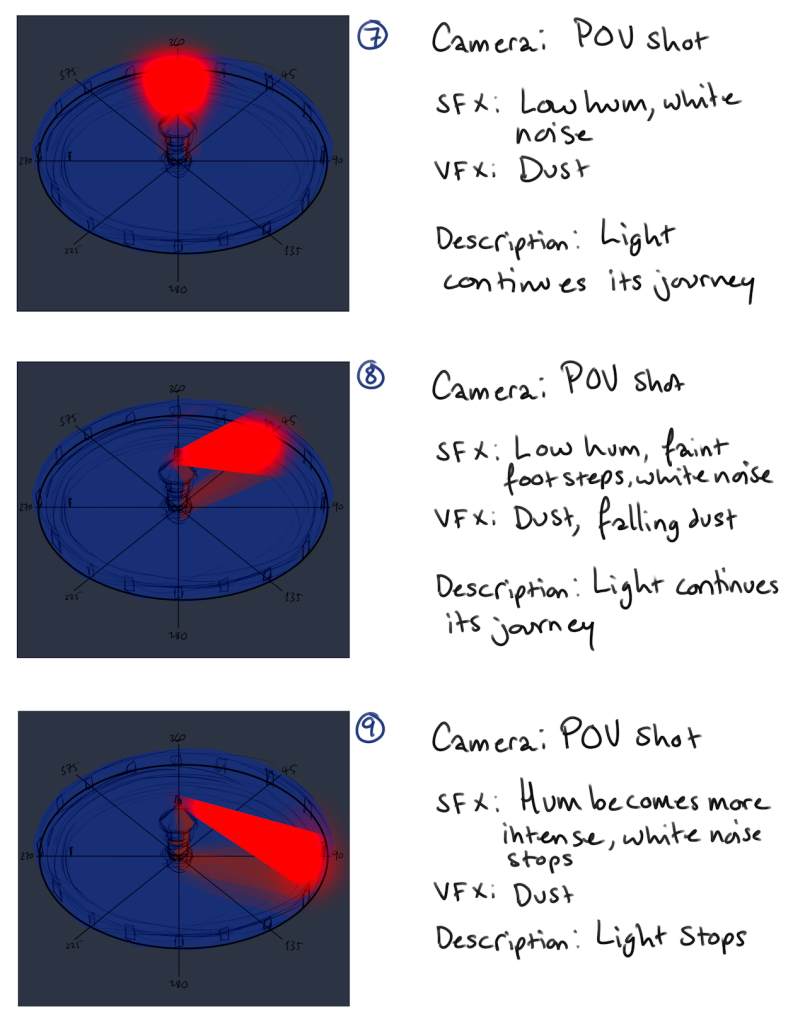

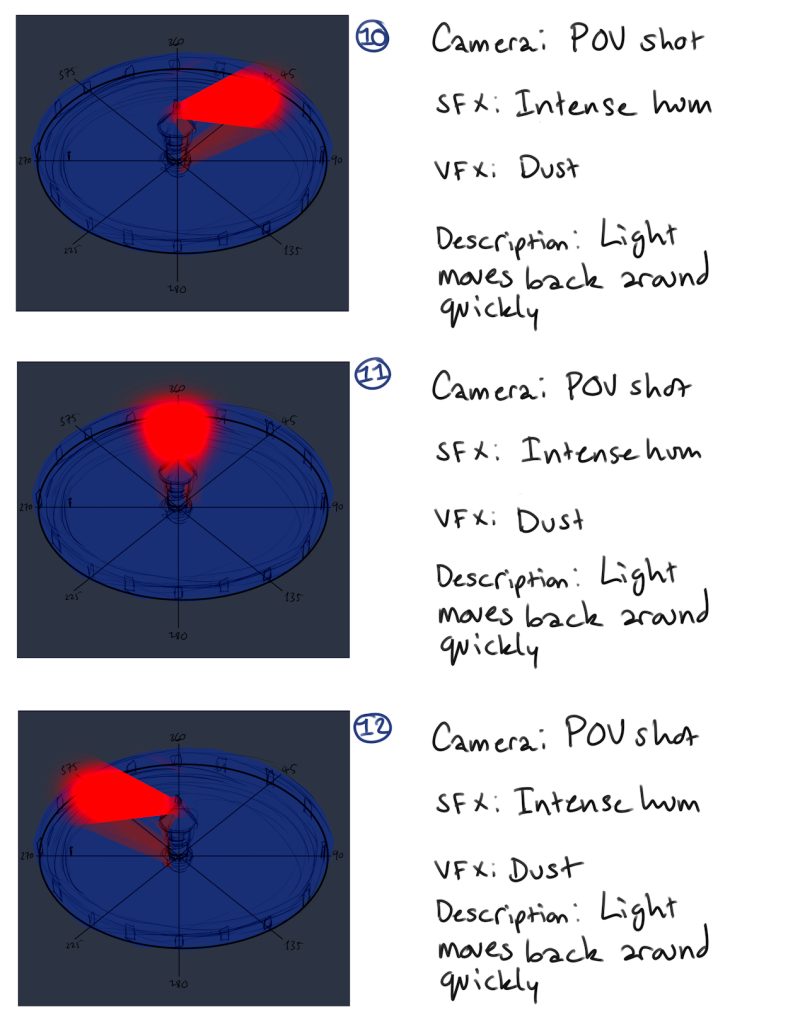

I also made use of a 360 storyboard to plan the small series of events for the prototype, as well as plan out what VFX and sound could be added in the event that this prototype was brought forward into a finished product.
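When placing events on a flat 360 storyboard panel, it can help to work out where a direction around the viewer lands on the image. The following is a minimal sketch, assuming a standard equirectangular layout where the centre of the panel is straight ahead, yaw increases to the viewer’s right, and pitch increases upwards. The function name and the panel size are hypothetical and are not taken from any particular storyboard template.

```python
def direction_to_equirect(yaw_deg, pitch_deg, width, height):
    """Map a viewing direction to (x, y) pixel coordinates on an equirectangular panel.

    yaw_deg:   0 is straight ahead, positive is to the viewer's right
    pitch_deg: 0 is the horizon, positive is up (clamped to -90..90)
    """
    # Wrap yaw into [-180, 180) so that values such as 270 still land on the panel
    yaw = (yaw_deg + 180.0) % 360.0 - 180.0
    # Clamp pitch so it never goes past the top or bottom edge
    pitch = max(-90.0, min(90.0, pitch_deg))

    x = (yaw + 180.0) / 360.0 * width   # left edge is directly behind the viewer
    y = (90.0 - pitch) / 180.0 * height  # top edge is straight up
    return x, y


# Hypothetical example: a searchlight that starts to the viewer's right
# and pans round until it faces them head-on.
for yaw in (90, 60, 30, 0):
    print(yaw, direction_to_equirect(yaw, 10, 2048, 1024))
```

Plotting a few positions like this gives a rough idea of how far a moving object travels across the panel between storyboard beats, and whether it crosses the seam at the left and right edges.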

With this enclosed environment, a developed version of this scene would allow me to better consider what the viewer needs to be focused on. It would be important to make sure that any objects that I wanted the user to look at stood out because in a 360 environment, users are more likely to be distracted, wanting to take in everything that they can see for fear of missing out on something important.

For the prototype, I used the contrast in colour between the light and the environment to draw the eye towards the light as it moved around the space, as that was the most important thing in the scene.

In a polished version of this, I would also add a physical searchlight with an emissive texture so more attention could be drawn to it. I would also use this light to focus on other parts of the environment to highlight additional set pieces that could happen around the viewer, such as doors opening, or more of the unstable ceiling crumbling.

I would also have to consider, in an experience that is planned to be unsettling, how a user might react, and the limits of what is comfortable. In VR, the user needs to be in control at all times, since they are shutting themselves off from the real world. For horror content such as this, I would consider warnings before the experience, and give the user the ability to pause and exit at any time.

This isn’t exclusive to horror content; the user needs to feel safe in VR, and feel safe leaving VR in the event of discomfort, since the transition between virtual and real spaces can be jarring, particularly for new users. To address this, I would use a fade-to-black transition when a user wanted to go back to the main menu, which would also block off the horror experience so that they know they are safe, and another transition when exiting the experience.
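A fade-to-black like this comes down to animating the opacity of a black overlay over a short duration. The following is a minimal sketch of that timing, assuming a smoothstep ease so the fade starts and ends gently; the function name and the one-second duration are hypothetical choices, not settings from any specific engine or player.

```python
def fade_opacity(t, duration, fade_out=True):
    """Opacity of a black overlay at time t seconds (0 = clear, 1 = fully black)."""
    if duration <= 0:
        # No time to animate: jump straight to the end state
        return 1.0 if fade_out else 0.0
    # Normalise progress to 0..1 and clamp it
    p = max(0.0, min(1.0, t / duration))
    # Smoothstep easing avoids an abrupt start or stop
    eased = p * p * (3.0 - 2.0 * p)
    return eased if fade_out else 1.0 - eased


# Hypothetical example: sample a one-second fade to black at 4 steps
for step in range(5):
    t = step / 4
    print(t, fade_opacity(t, 1.0))
```

Calling the same function with `fade_out=False` gives the matching fade back in, for example when the main menu appears.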

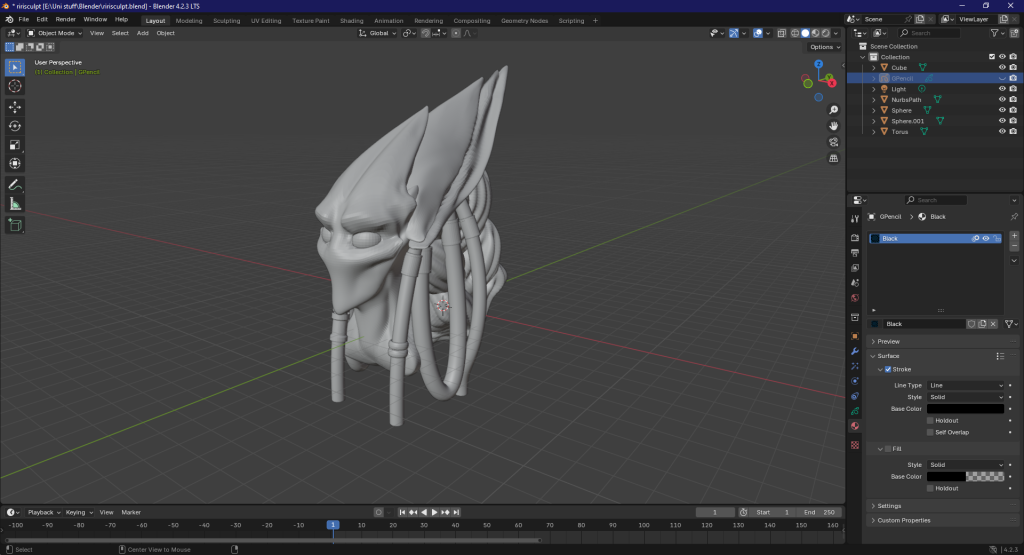

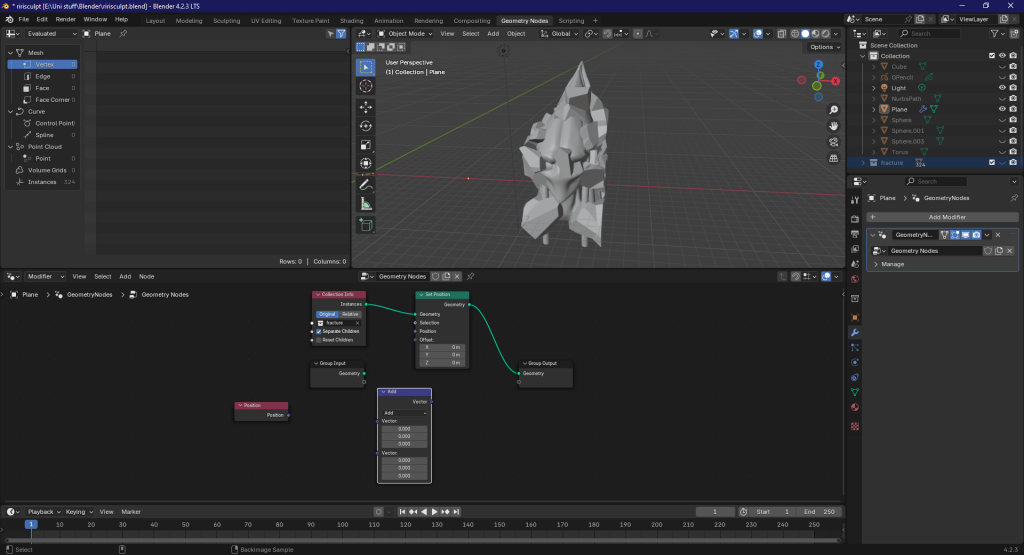

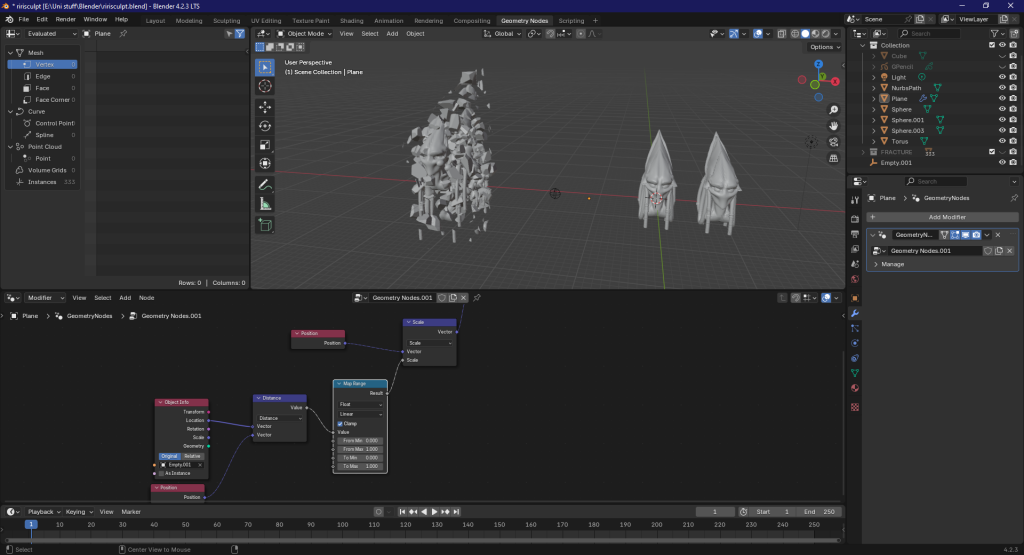

Geometry Nodes:

As part of what I could implement into a 360 video using Blender, I was given tutorials on the Geometry Nodes functions, which are similar to Maya’s MASH:

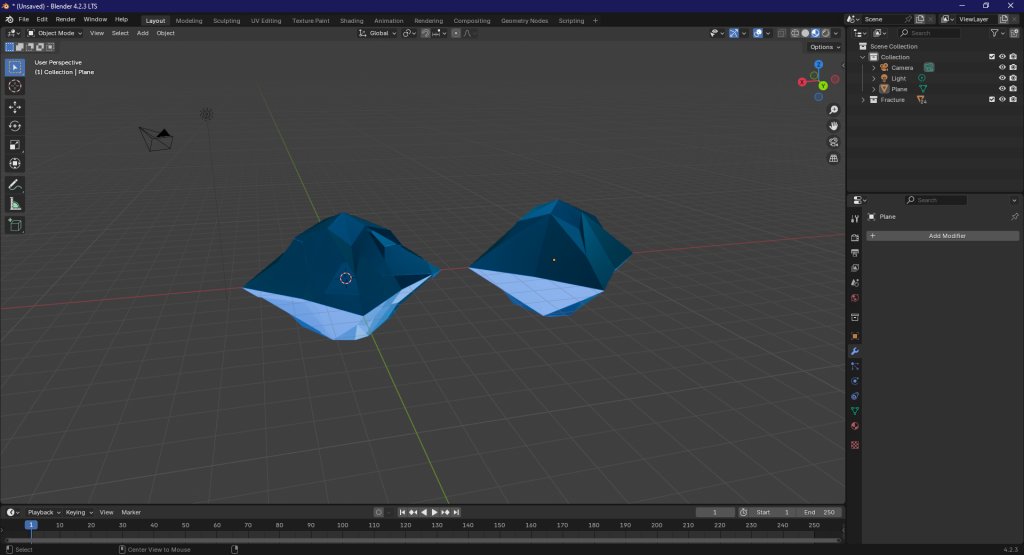

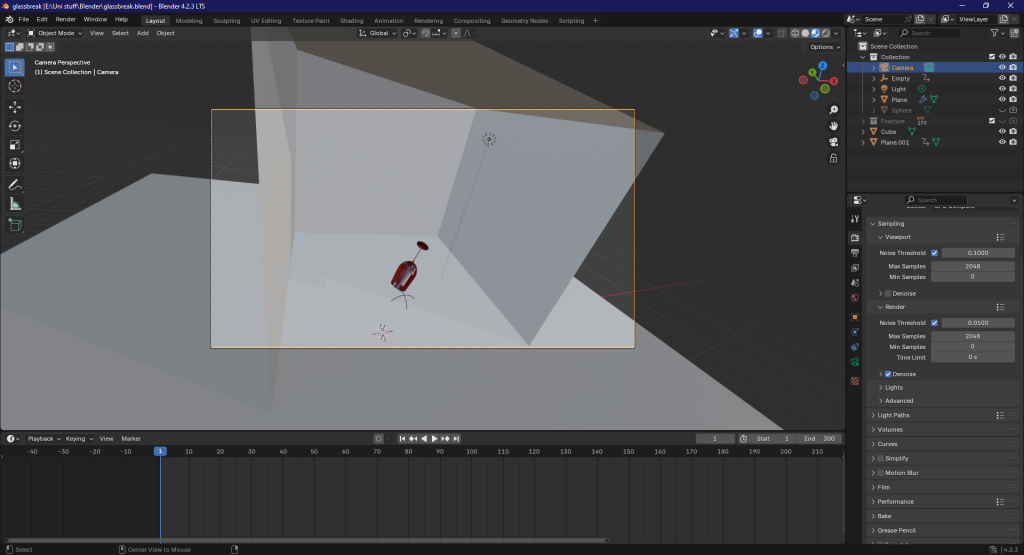

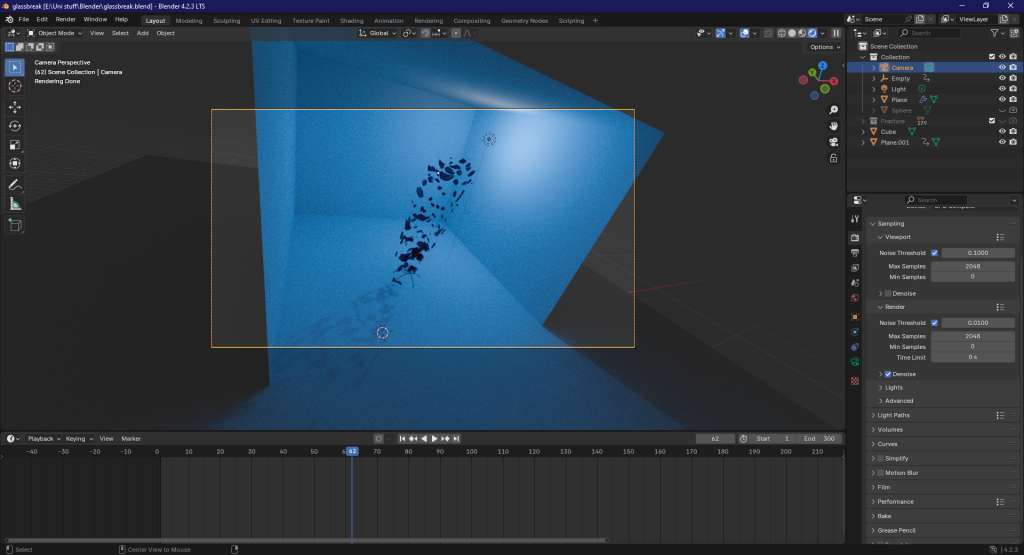

Initially, I tried to sculpt one of my own characters to follow the tutorial with, but the geometry wasn’t clean enough for the nodes to function correctly, so I made a simple gemstone and a glass, animating both to shatter.

This type of effect could be used in the previous panopticon scene. I mentioned a crumbling ceiling, and I could use the Cell Fracture add-on from the tutorial to create that set piece, along with appropriate sound effects.

After playing with the cell fracture add-on, I moved on to audio visualisation, creating a quick test using this tutorial:

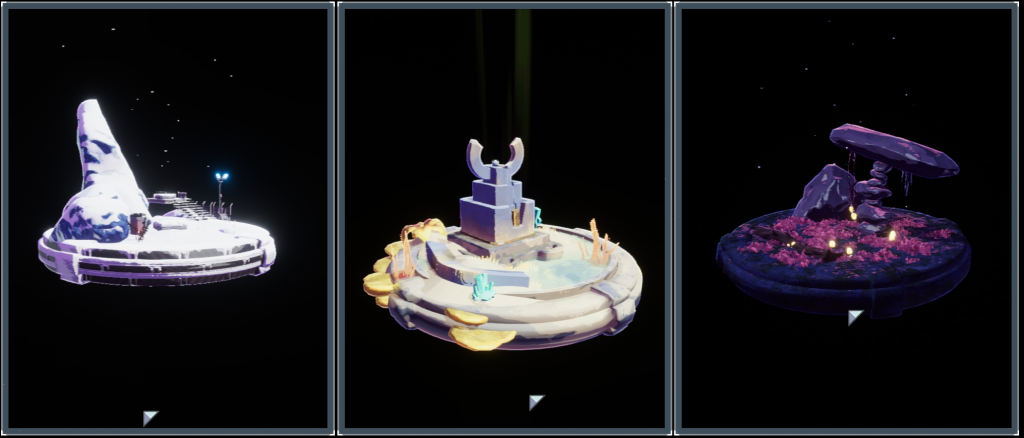

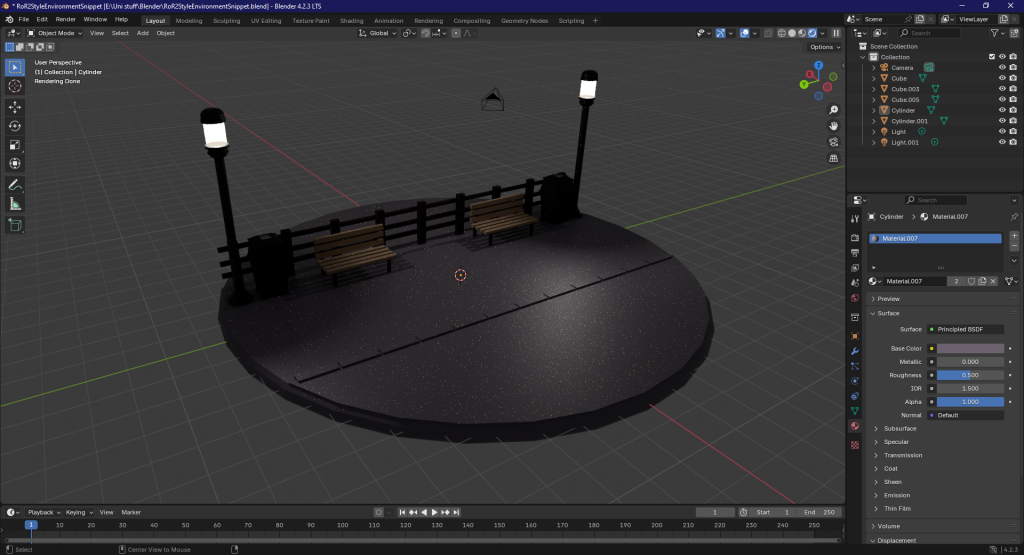

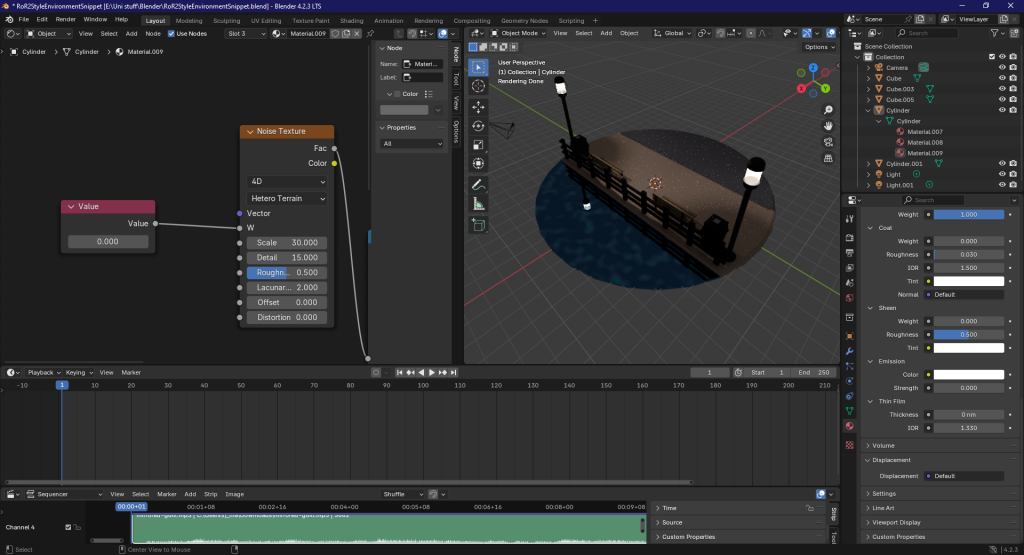

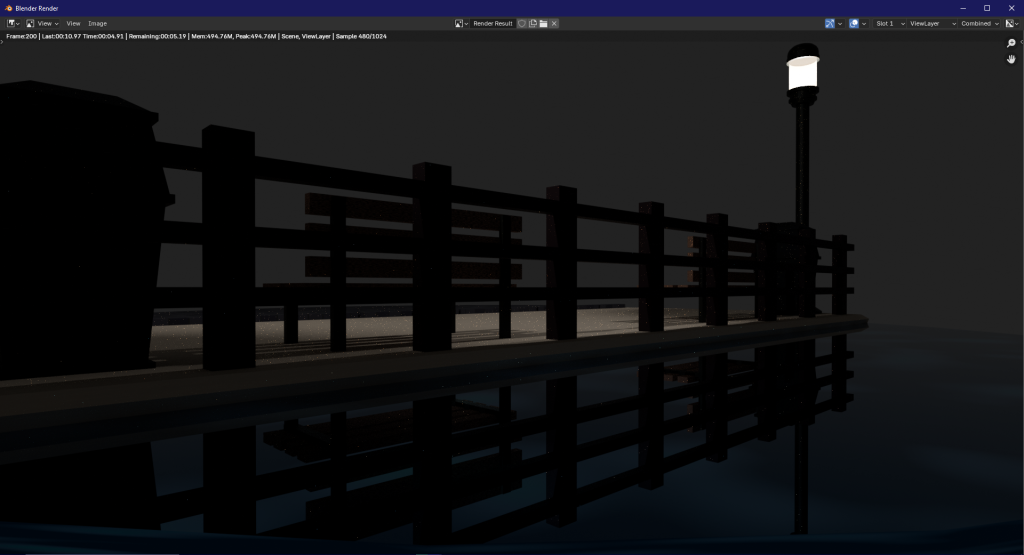

This effect uses the waveform of the music to change the value of a chosen part of the geometry, but it wasn’t quite what I was looking for. I decided instead to try this method on materials to create the illusion that water was moving with the music, creating a small scene inspired by a mix of the environment previews in Risk of Rain 2 and the area where James first meets Maria in Silent Hill 2.
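The general idea of driving a value from a waveform can be sketched outside Blender. The following is a minimal illustration, assuming the audio is already available as a list of samples between -1 and 1. It is not the tutorial’s node setup or Blender’s own sound baking, and the function names and the output range are hypothetical.

```python
import math


def per_frame_amplitude(samples, sample_rate, fps):
    """Return one loudness value per video frame, normalised to 0..1."""
    # Number of audio samples covered by one video frame (truncated for simplicity)
    samples_per_frame = max(1, int(sample_rate / fps))
    levels = []
    for start in range(0, len(samples), samples_per_frame):
        chunk = samples[start:start + samples_per_frame]
        # Root mean square gives a steadier loudness measure than the raw peak
        rms = math.sqrt(sum(s * s for s in chunk) / len(chunk))
        levels.append(rms)
    peak = max(levels, default=0.0)
    if peak == 0.0:
        return [0.0 for _ in levels]
    return [level / peak for level in levels]


def map_to_range(level, low, high):
    """Scale a 0..1 level into the range a geometry or material input expects."""
    return low + level * (high - low)


# Hypothetical example: a one-second 2 Hz tone sampled at 240 Hz, rendered at 24 fps
tone = [math.sin(2 * math.pi * 2 * n / 240) for n in range(240)]
levels = per_frame_amplitude(tone, sample_rate=240, fps=24)
offsets = [map_to_range(level, 0.0, 2.0) for level in levels]
print(len(offsets))
```

Each value in `offsets` could then be keyframed onto whichever input should react to the music, whether that is a piece of geometry or a texture offset on a water material.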

The original sequence made the texture move a lot faster than I wanted, so I slowed the output frames in Premiere Pro, but that made it look choppy. If I attempt to utilise this in a 360 video, I will be looking into how I can use nodes to better control the way that something like this would look, because flashing textures or lights can induce seizures even in 2D experiences. In VR, the user is closed off from the real world via the headset, and so it is doubly important that photosensitive triggers be considered when there is a chance that a seizure could lead to a more serious injury.
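One way to approach both problems is to smooth the driving values before rendering, rather than slowing the footage afterwards, and then to check roughly how often the result flashes. The following is a minimal sketch under stated assumptions: brightness is given as one value per frame between 0 and 1, a flash is counted as a pair of opposing frame-to-frame changes of at least `min_change`, and the limit of three flashes per second follows the general flash threshold in WCAG 2.3.1. It ignores flash area and red flashes, so it is a rough warning rather than a compliance test, and all names here are hypothetical.

```python
def smooth(values, alpha=0.2):
    """Exponential moving average: a lower alpha gives slower, gentler movement."""
    smoothed = []
    current = None
    for v in values:
        current = v if current is None else current + alpha * (v - current)
        smoothed.append(current)
    return smoothed


def max_flashes_per_second(brightness, fps, min_change=0.1):
    """Rough worst-case number of flashes inside any one-second window."""
    # Record the frames where brightness jumps by at least min_change
    # and the jump reverses the direction of the previous recorded jump
    transitions = []
    last_direction = 0
    for i in range(1, len(brightness)):
        delta = brightness[i] - brightness[i - 1]
        if abs(delta) < min_change:
            continue
        direction = 1 if delta > 0 else -1
        if direction != last_direction:
            transitions.append(i)
            last_direction = direction
    # Slide a one-second window along the transitions; two opposing
    # transitions (up then down, or down then up) make one flash
    worst = 0
    for idx, start_frame in enumerate(transitions):
        in_window = sum(1 for f in transitions[idx:] if f < start_frame + fps)
        worst = max(worst, in_window // 2)
    return worst


# Hypothetical example: a texture that flips between dark and bright every frame
harsh = [0.0, 1.0] * 12
gentle = smooth(harsh, alpha=0.05)
for label, signal in (("harsh", harsh), ("gentle", gentle)):
    count = max_flashes_per_second(signal, fps=24)
    print(label, count, "over limit" if count > 3 else "within limit")
```

Because the smoothing happens on the values themselves, every rendered frame is still unique, which avoids the choppiness that comes from stretching finished frames in an editor.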

Frame VR

Frame VR is a web-based VR application that lets users create virtual worlds. Because it runs in the browser, no app needs to be installed, which makes it accessible to a wide variety of VR headsets as well as to desktop users.

Applications like Frame VR are primarily used to create social spaces that users can hang out in. Creators can add assets to a set of pre-made worlds, from artwork to 3D models, and create interactive experiences for groups of people to join and enjoy.

Lab Work:

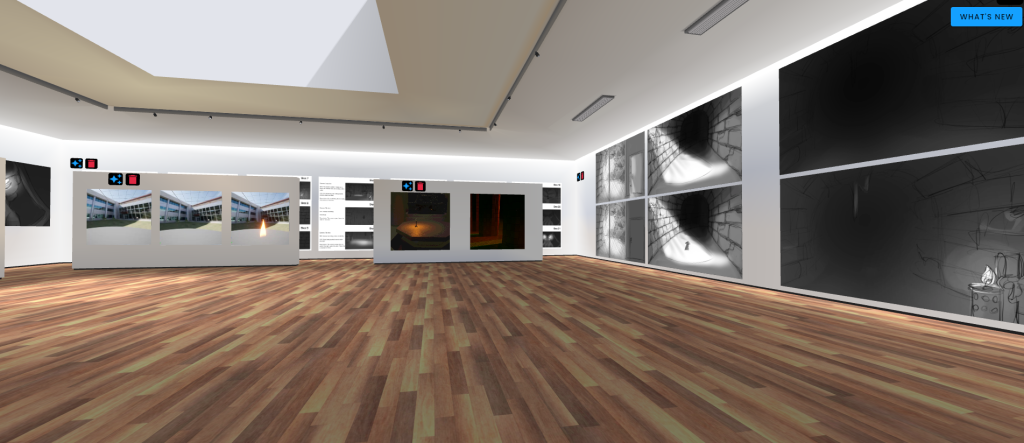

Using artwork I created for my VFX project, alongside the full video, I created a portfolio gallery in one of these environments.

I used the web application on a PC rather than through a VR headset, which is likely why the controls were a little difficult to get used to. It functions in a similar way to a game engine’s level building tools, though the controls to change the camera view often interfered with moving media around.

From looking at the tutorials Frame VR provides, creating spaces with a headset is more intuitively designed; had I accessed the website through VR, I likely would have enjoyed putting together the space.

As an aside, because it was a web application, having many videos automatically play when a user loaded in caused poor performance, meaning that the supposed accessibility was undercut by user hardware and the amount of content a creator put into the provided virtual spaces. While automatic video playback can be toggled off, it is on by default, and the setting is not immediately apparent within the menus.

Long loading times and low framerates can cause motion sickness in a VR experience, and crashing is a jarring transition for the user that should be avoided; the user needs to feel secure when in a virtual space. If I were to create a gallery with more media to show, I would make sure to avoid large resolution images, and keep GIFs and videos to a minimum with the automatic play toggled off, so that the world has less to load when a user joins and they can control what they see and when.

This type of application could be used to show off various portfolio pieces, but traditional 2D websites such as ArtStation are still more accessible in that regard, and don’t have the added risk of being unable to host large amounts of files.

Concepts:

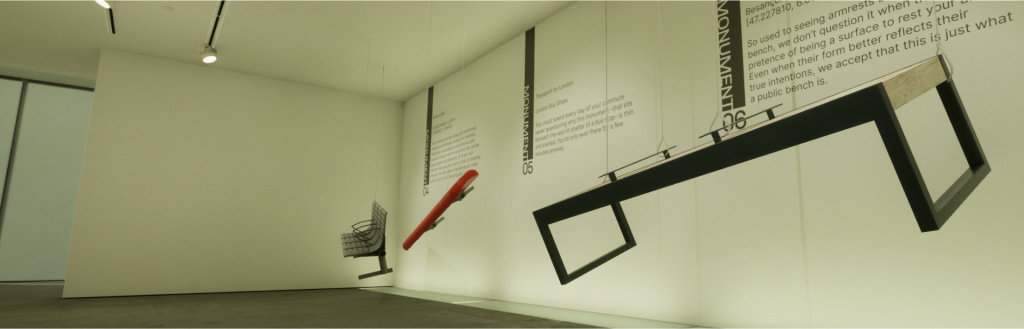

While I didn’t enjoy using Frame VR to create a portfolio space, it did give me the idea to possibly use Blender to make a 360 video of a museum tour about one particular subject, similar to the experience ‘Monuments to Guilt’ by a creator called Louis. This experience takes the player through a museum full of examples of hostile design, such as benches that are designed to be uncomfortable to deter homeless people from sleeping on them, and that as a result make everyone who might need to sit in a public space uncomfortable.

Another use for VR would be to create small, customisable sensory spaces for meditation. A user would be able to control the lighting, surroundings, and sounds through menus, or, instead of customisation, the user could choose between a few pre-made rooms to relax in. These rooms would have different themes and ambient sounds that have been found to be calming for people with sensory issues. Research would have to be done on what types of sounds and visuals should be avoided, as well as which combinations would be best suited, since no two people with sensory issues are the same. I could make use of Blender’s geometry nodes to create set pieces synced with the audio in each of the rooms.

References:

3DDrip Studio (2023). Shatter and Destroy Objects with Geometry Nodes and Cell Fracture Add-On in Blender | Tutorial. [online] YouTube. Available at: https://www.youtube.com/watch?v=4cE0rmBcgxE [Accessed 3 Oct. 2024].

Dalangin, M.A. (2021). Applying UX in VR (Virtual Reality) | Userpeek.com. [online] Userpeek.com. Available at: https://userpeek.com/blog/applying-ux-in-vr-virtual-reality/ [Accessed 13 Oct. 2024].

Frame (2022). Frame: Virtual Reality Mode. [online] YouTube. Available at: https://www.youtube.com/watch?v=CG1D7Ek09LQ [Accessed 29 Oct. 2024].

HEALTH (2024). HEALTH :: (OF ALL ELSE). [online] YouTube. Available at: https://www.youtube.com/watch?v=G5I8RlCSQ5o [Accessed 10 Oct. 2024].

Khurana, K. (2023). Role of UX in Virtual Reality – Procreator Blog. [online] ProCreator Blog: Design, Technology, Innovation. Available at: https://procreator.design/blog/user-experience-ux-in-virtual-reality/ [Accessed 20 Oct. 2024].

Lacy, L. (2017). Google: Here’s how to use VR to tell better stories. [online] The Drum. Available at: https://www.thedrum.com/news/2017/06/28/google-here-s-how-use-vr-tell-better-stories [Accessed 13 Oct. 2024].

Louis (2023). Monuments To Guilt by louis. [online] itch.io. Available at: https://louisthings.itch.io/monuments-to-guilt [Accessed 26 Oct. 2024].

Luca Onniboni (2022). The Panopticon in the French prison of Auntun | Archiobjects. [online] Archiobjects. Available at: https://www.archiobjects.org/the-panopticon-in-the-french-prison-of-auntun/ [Accessed 10 Oct. 2024].

Merkle, C. (2017). Storyboarding in 360°: A Case Study. [online] CinematicVR. Available at: https://medium.com/cinematicvr/storyboarding-in-360-2ddce59d627d [Accessed 26 Oct. 2024].

Nik Kottmann (2022). Easy MUSIC VISUALIZER in Blender! [online] YouTube. Available at: https://www.youtube.com/watch?v=f4I3O85STFY [Accessed 3 Oct. 2024].

Olsen, D. (2023). The Future is a Dead Mall – Decentraland and the Metaverse. [online] YouTube. Available at: https://www.youtube.com/watch?v=EiZhdpLXZ8Q [Accessed 20 Aug. 2023].

Pebble (2017). 360 Virtual Reality | 360 vs VR | Blog | Pebble Studios. [online] Available at: https://pebblestudios.co.uk/2017/03/360-virtual-reality-demystifying-differences-between-360-vr/ [Accessed 20 Oct. 2024].

PIXXO 3D (2022). Tutorial: Beginners Head Sculpt | EASY In Blender. [online] YouTube. Available at: https://www.youtube.com/watch?v=SVf-UvySGqI [Accessed 20 Oct. 2024].

Renshaw, P. (2021). Looking Back to 2001 and the Emotional Horror of Silent Hill 2 | TheXboxHub. [online] TheXboxHub. Available at: https://www.thexboxhub.com/looking-back-to-2001-and-the-emotional-horror-of-silent-hill-2/ [Accessed 25 Oct. 2024].

Shahrooz Shekaraubi (2023). Navigating the Transition from 2D to 3D UI/UX Design in VR. [online] Medium. Available at: https://medium.com/@ShahroozShekaraubi/navigating-the-transition-from-2d-to-3d-ui-ux-design-in-vr-771dc756ba3a [Accessed 20 Oct. 2024].

Sheldon, S. (2016). Social Media: The Modern Panopticon. [online] www.linkedin.com. Available at: https://www.linkedin.com/pulse/social-media-modern-panopticon-stuart-sheldon [Accessed 10 Oct. 2024].

TaintedTownMusic (2012). Mirrored Guilt. [online] YouTube. Available at: https://www.youtube.com/watch?v=BMkLwDBY0e0 [Accessed 10 Oct. 2024].

Thompson, S. (2020). Motion Sickness in VR: Why it happens and how to minimise it. [online] virtualspeech.com. Available at: https://virtualspeech.com/blog/motion-sickness-vr [Accessed 1 Nov. 2024].

Watson, Z., Crago, A., Nicholls, K., Beckett, S., Lammiman, D. and Hill, N. (2019). Making VR a Reality. [online] Available at: http://downloads.bbc.co.uk/aboutthebbc/internetblog/bbc_vr_storytelling.pdf [Accessed 26 Oct. 2024].