AR | Zapworks/Adobe Aero

Marker-based/Markerless/Location-based AR

Marker-based AR uses an anchor, a specific pattern or image detected through the device’s camera, to trigger the experience that has been created.

This type of technology has a wide variety of uses. In industry, for example, existing CAD data for a particular make and model of car can be overlaid onto that car, using the vehicle itself as the anchor, so that workers can be trained in its mechanical maintenance. The same method can be used for remote maintenance, where someone with no mechanical knowledge can follow detailed step-by-step instructions given by the AR programme.

Markerless AR uses the dimensions of a room to decide where objects should be placed and how large they should appear in that space.

The app IKEA Place uses this type of augmented reality, allowing users to place virtual furniture in their home before buying it, so they can be sure it is the right choice.

AR can also use GPS information to make objects appear. Games like Pokémon GO and apps like Google Maps use the device’s location to show directions or trigger an event, such as a Pokémon appearing.
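To illustrate the location-based trigger described above, here is a minimal Python sketch, assuming a simple circular geofence: the experience fires when the device’s GPS fix falls within a chosen radius of an anchor point, with the distance computed using the haversine formula. This is not how Pokémon GO or Google Maps are actually implemented; the function names, the 30 m radius, and the coordinates are all hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def should_trigger(device, anchor, radius_m=30.0):
    """Hypothetical geofence check: True when the device is within radius_m of the anchor."""
    return haversine_m(device[0], device[1], anchor[0], anchor[1]) <= radius_m


# Hypothetical anchor location and device readings (illustrative, not real data).
anchor = (51.5007, -0.1246)
print(should_trigger((51.5008, -0.1246), anchor))  # about 11 m away -> True
print(should_trigger((51.5100, -0.1246), anchor))  # about 1 km away -> False
```

Phone GPS is often only accurate to within several metres, so in practice the radius is a trade-off: too small and the event may never fire, too large and it fires before the user reaches the intended spot.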

With this type of technology, it is important to consider safety. AR requires the phone’s camera to be trained on the marker, location, or room for the whole experience, which can be a problem because the user may not be paying attention to obstacles and dangers around them. To mitigate this, games such as Pokémon GO show loading-screen warnings reminding players to stay aware of their surroundings and not to trespass on private land, which was a major issue when the game was first released.

When AR is used indoors, obstacles in the room still have to be considered; for example, a 3D model shouldn’t hide trip hazards as a user walks around it. In these cases, furniture and anything else that could get in the way should occlude the 3D asset, rather than being covered by it.

Lab Work:

Zapworks:

Zapworks is a web-based AR application. It uses QR codes to activate markers, typically images, which then display a creator’s content in the real-world space through the camera of a phone or tablet.

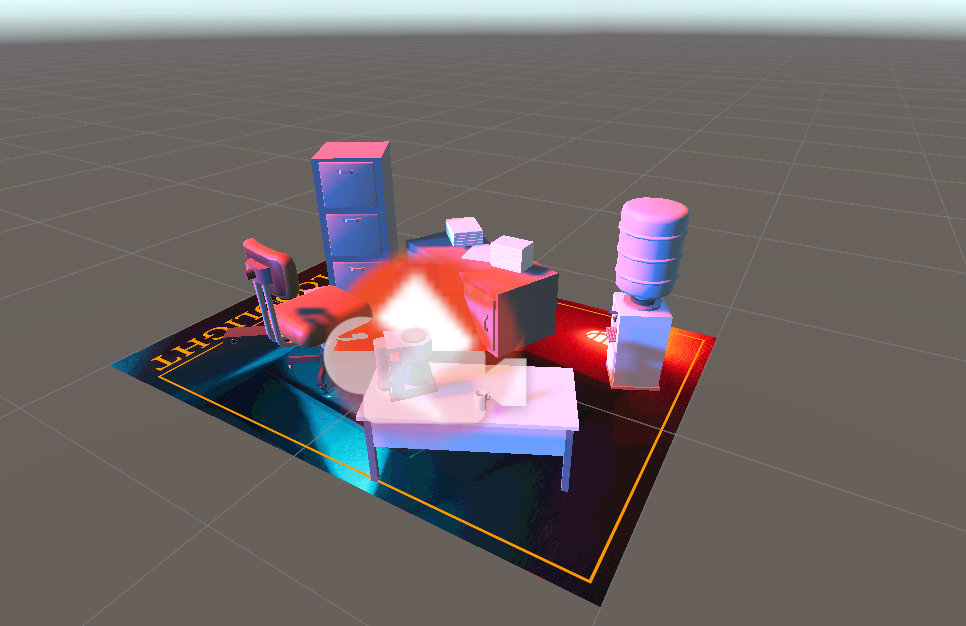

Working in Unity, I began with the provided tutorial and assets, one static and the other animated:

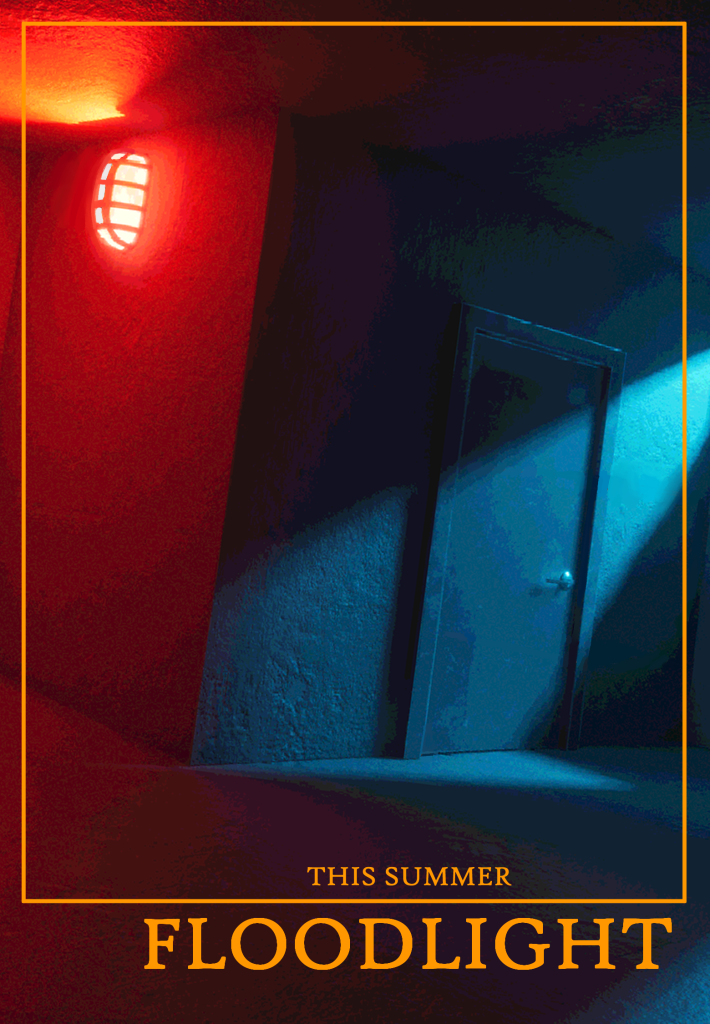

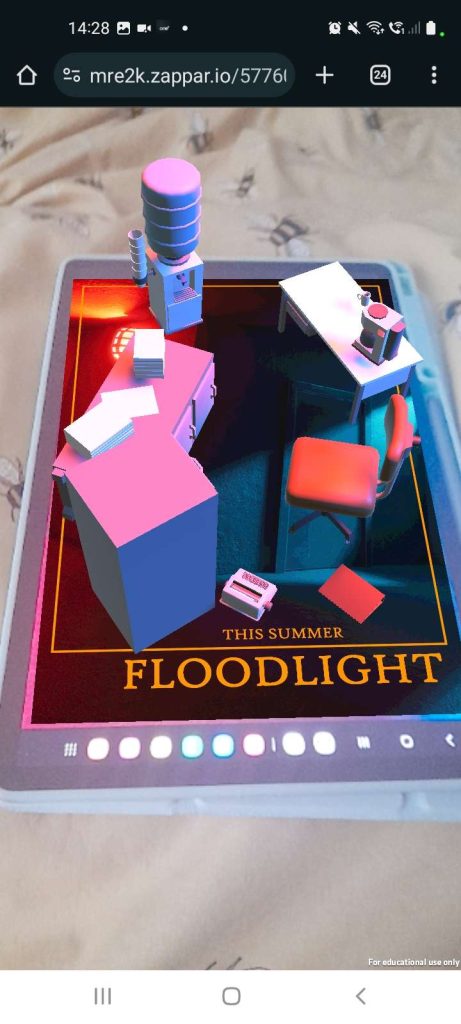

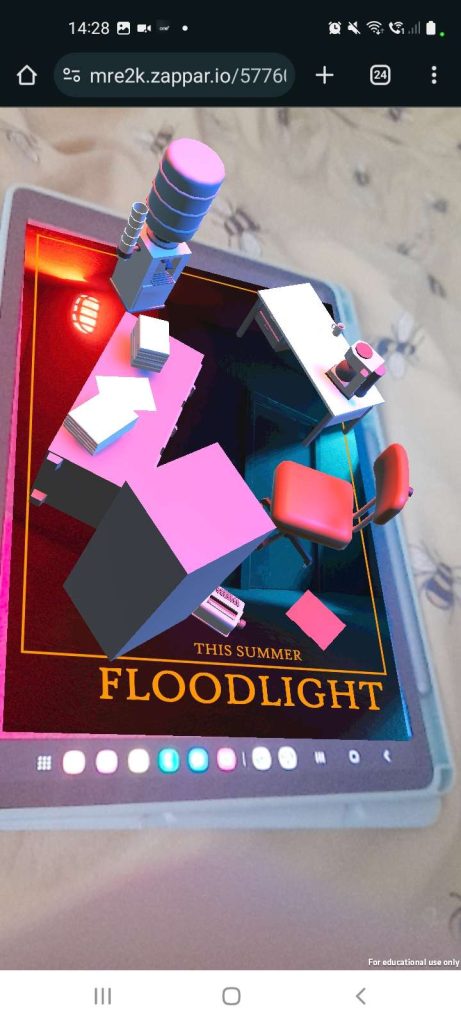

For my own experiment, I used one of the mock movie posters I had created for my Level Design project the previous year as the image anchor. Of the four posters, I chose the one advertising Floodlight, a VFX project I had also made. Because the level was set in the same world as the Floodlight short film, I bundled together the assets I had used to build part of that level environment and adjusted the scene lighting to match the poster.

After saving the project, I uploaded it to Zapworks, which generated the QR code necessary to activate the movie poster and make the assets appear.

For the poster to activate properly, I had to increase the brightness of my tablet screen. When creating AR experiences, I would need to consider the surfaces and images used as anchors: reflective surfaces can interfere with recognition of the image, and, as I found out, images that are too dark may not be detected by some cameras.
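One way to catch the darkness problem before uploading is a quick pre-flight check on the anchor image. The sketch below is a hypothetical heuristic, not part of Zapworks: it averages Rec. 709 luma weights over 8-bit RGB pixels and flags the image if the mean falls below an assumed threshold of 60 (out of 255). Brightness is only a rough proxy; image trackers generally also depend on contrast and visual detail, so a bright but featureless image can still fail.

```python
def mean_luma(pixels):
    """Average Rec. 709 luma (0-255) over an iterable of 8-bit (r, g, b) tuples."""
    total = 0.0
    count = 0
    for r, g, b in pixels:
        total += 0.2126 * r + 0.7152 * g + 0.0722 * b
        count += 1
    if count == 0:
        raise ValueError("image has no pixels")
    return total / count


def looks_too_dark(pixels, threshold=60.0):
    """Heuristic flag: True if mean luma is below a hypothetical threshold."""
    return mean_luma(pixels) < threshold


# Illustrative pixel data: a mostly dark poster with a few bright highlights.
dark_poster = [(20, 25, 30)] * 100 + [(200, 180, 150)] * 5
print(round(mean_luma(dark_poster), 1))  # 31.8
print(looks_too_dark(dark_poster))       # True
```

With a real image file, the pixel tuples could come from an image library; the list here simply stands in for that data.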

If I were to use Zapworks for a wider project, I would consider adding animations to make an advertisement like this more engaging, and making the scene pop out of the image anchor rather than rest on it, since movie posters are vertical, not horizontal.

Adobe Aero:

Adobe Aero is an AR application that doesn’t require an image anchor; instead, it uses location data or the layout of a room to place its assets. As with Zapworks, creators can upload their assets and build experiences that people can then view on their own devices after scanning a QR code.

Initially, we used the Adobe Aero app on an iPad, which prompted us to set up a space on a surface; I chose a desk. Unfortunately, a glitch in the app meant we could not use any of the provided assets except a small robot, although Aero still let us make the robot move across the desk, jump when tapped, and so on.

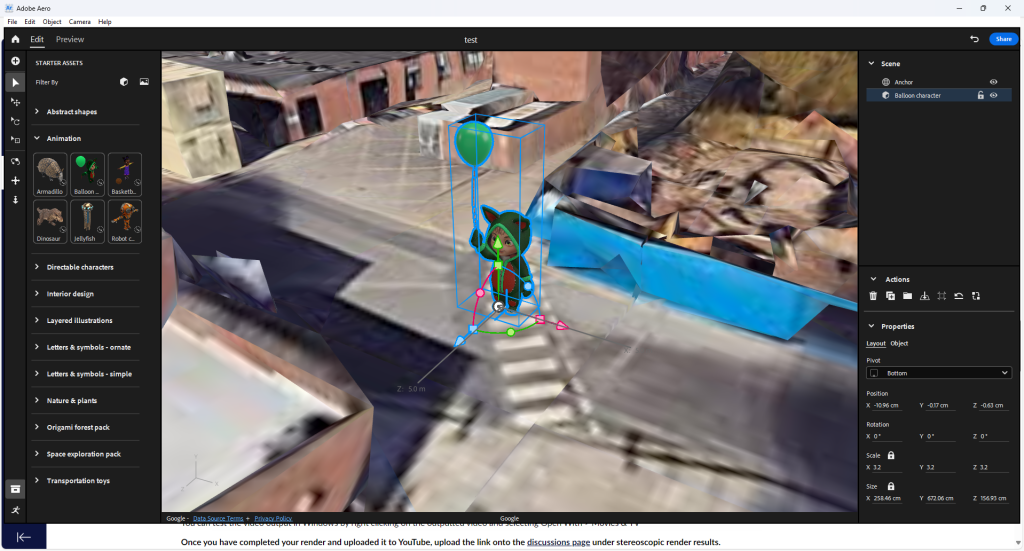

We switched to the experimental Aero application on PC to access more of the assets Adobe provided. Using a location scan of the University grounds, we pinpointed the crossing next to our building and placed an asset there, set to appear when a camera was pointed at that spot.

As with Zapworks, Aero provided a QR code; after scanning it, we could see the placeholder asset through the phone camera.

I didn’t experiment with Aero further, as I prefer creating AR in Zapworks. However, because Aero only needs a location or a room to trigger an experience, using it for a project would give me far more freedom than Zapworks in where I could set up something interactive.

For both applications, I would have to consider carefully the location I chose for an AR experience, as safety and trespassing have proven to be real issues. The number of assets and animations would also need consideration: users have a wide variety of smart devices, and too many moving parts or high-poly models could cause an older device to lag or crash.

Concepts:

Technology like this could be used in museums, aquariums, or wildlife parks. These spaces generally have a small information board next to each exhibit, which could be turned into a more interactive experience. Visitors could scan a QR code to see a detailed diagram of the exhibit alongside the information normally provided on the board. In museums, the piece itself could be used as the image anchor, allowing text to appear around it that explains certain attributes or the artist’s intentions.

AR could also add a layer of interaction to movie posters. Users would scan a QR code in the corner of the poster and then view the poster through their phone, causing a set piece from the movie, or a trailer, to pop up in real time.

I have personally used QR codes to advertise my portfolio. To make this more interesting, scanning the QR code could provide a social media link and make pieces of the artist’s portfolio appear on a nearby wall or other surface.

References:

Advanced Technology Services, Inc. (2018). Augmented Reality in Maintenance | How is it Helping? | ATS. [online] Available at: https://www.advancedtech.com/blog/augmented-reality-in-maintenance/ [Accessed 1 Nov. 2024].

Dutertre, A. (2023). The ethical challenges of AR/VR. [online] Medium. Available at: https://medium.com/@alex24dutertre/the-ethical-challenges-of-ar-vr-a5333594f909 [Accessed 2 Nov. 2024].

IKEA (2017). Launch of new IKEA place app. [online] IKEA. Available at: https://www.ikea.com/global/en/newsroom/innovation/ikea-launches-ikea-place-a-new-app-that-allows-people-to-virtually-place-furniture-in-their-home-170912/ [Accessed 1 Nov. 2024].

Lardinois, F. (2020). Google Maps gets improved Live View AR directions. [online] TechCrunch. Available at: https://techcrunch.com/2020/10/01/google-maps-gets-improved-live-view-ar-directions/ [Accessed 1 Nov. 2024].

Nguyen, N. (2017). Pokémon Go Has A New, More Realistic Augmented Reality Mode. [online] BuzzFeed News. Available at: https://www.buzzfeednews.com/article/nicolenguyen/how-new-pokemon-go-iphone-ar-mode-works [Accessed 1 Nov. 2024].

Nicolas, S. (2021). 60’s Office Props – Download Free 3D model by SeanNicolas. [online] sketchfab.com. Available at: https://sketchfab.com/3d-models/60s-office-props-dc00ea320cfa4aad90811080270672db [Accessed 5 Jan. 2024].

Ptc.com. (2024). Augmented Reality in Maintenance | PTC. [online] Available at: https://www.ptc.com/en/technologies/augmented-reality/solutions-for-service/maintenance [Accessed 1 Nov. 2024].

Rovira, A., Schieck, A.F.G., Blume, P. and Julier, S. (2020). Guidance and surroundings awareness in outdoor handheld augmented reality. PLoS ONE, [online] 15(3), p.e0230518. doi:https://doi.org/10.1371/journal.pone.0230518.

Warner Norcross + Judd LLP. (2011). Google Maps Shows the Path to Avoiding Liability for User Injuries – Warner Norcross + Judd LLP. [online] Available at: https://www.wnj.com/updates/google-maps-shows-the-path-to-avoiding-liability-for-user-injuries/ [Accessed 1 Nov. 2024].

zap.works. (2011). ZapWorks: Create Your Own Augmented Reality Experiences. [online] Available at: https://zap.works/ [Accessed 17 Oct. 2024].